Choosing to advertise on Facebook seems like a no-brainer; with 2.27 billion monthly active users, Facebook is the widest-reaching social network in the world.

When it comes to display ads, however, you may not be reaching as many users as you think.

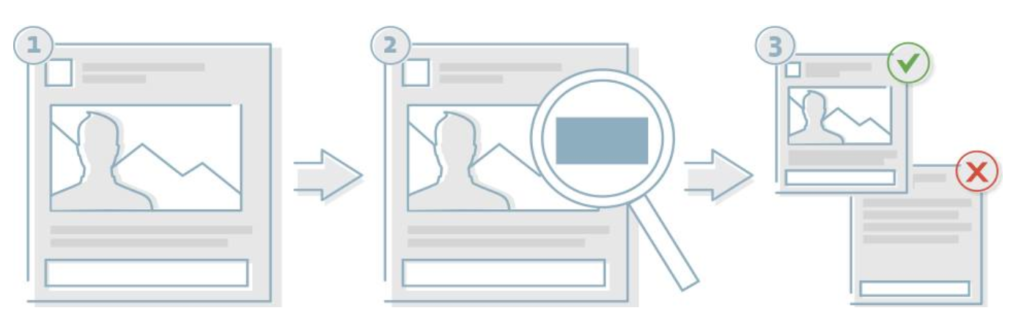

Facebook reviews ads that run on its platforms (namely Facebook, Instagram, and Audience Network) to measure the amount of text used in each ad image.

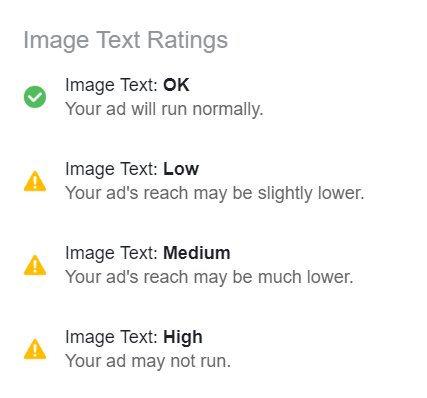

Facebook found that images made up of less than 20% text perform better than those with heavy text, so ads with higher amounts of image text may be shown to a smaller audience — or not shown at all.

Quick note: Some ad images may qualify for an exception. For example, book covers, album covers, and product images tend to naturally have more text associated with them, so Facebook often filters them differently.

If those categories apply to you, the testers below aren’t necessarily the right tools for your ads. To learn more about common exceptions, check out this Facebook help article.

How does Facebook review ads?

Facebook reviews different ad formats in different ways:

- For single image ads, the image is reviewed for compliance with the image text guidelines.

- For carousel ads, each individual image is analyzed for compliance.

- For video ads, the thumbnail is reviewed to make sure it doesn’t contain too much text.

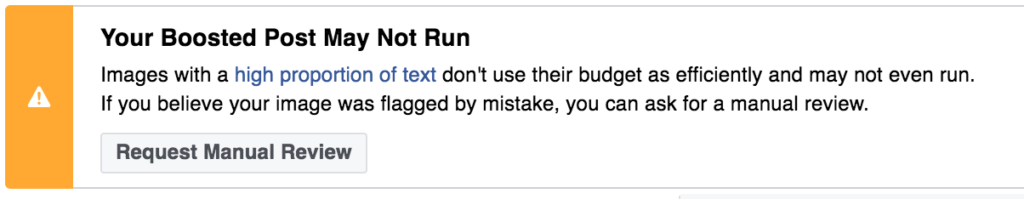

When you create your ad, Facebook automatically checks whether the ad image(s) contain too much text. If they do, you may get a message flagging the issue and giving you the option to request a manual review.

If you think your ad qualifies for an exception or may have been flagged incorrectly, Facebook recommends selecting the Request Manual Review option.

4 Facebook ad image testers:

To help you make sure your ads can reach as many people as possible, we rounded up four Facebook ad text testers that analyze your text-to-image ratio.

Here they are:

Official Facebook text overlay tool

Facebook’s official ad text tester allows you to upload an image to determine how much text is in your ad image.

Facebook warns that the tool may not be 100% accurate, but it can serve as a guide to help you create ads that are likely to comply with Facebook’s guidelines. You can access the tool here.

Social Contests Grid Image Checker Tool

The Facebook ad grid tester from Social Contests allows you to upload your ad image, then overlays a grid on your image.

By having you click on each grid square that contains text, the tool calculates a rough text-to-image ratio to help you determine whether your ad image meets Facebook’s guidelines.
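For readers who want to see the arithmetic behind a grid tester, here is a minimal Python sketch of the calculation described above. It is not the code any of these tools actually runs. The function names, the 5 x 5 grid size, and the choice to treat exactly 20% as passing are all assumptions made for illustration.

```python
def text_ratio(selected_cells, rows=5, cols=5):
    """Return the fraction of grid squares marked as containing text.

    selected_cells: iterable of (row, col) pairs, zero-indexed.
    rows, cols: grid size; 5 x 5 is an assumption, not a documented value.
    """
    unique = set(selected_cells)  # clicking the same square twice counts once
    for row, col in unique:
        if not (0 <= row < rows and 0 <= col < cols):
            raise ValueError(f"square {(row, col)} is outside the {rows}x{cols} grid")
    return len(unique) / (rows * cols)


def within_guideline(selected_cells, rows=5, cols=5, limit=0.20):
    """True if the marked share of squares is at or below the limit (assumed 20%)."""
    return text_ratio(selected_cells, rows, cols) <= limit


# Hypothetical example: text touches three squares in the top-left corner.
marked = [(0, 0), (0, 1), (1, 0)]
print(f"{text_ratio(marked):.0%} of squares contain text")
print("within guideline:", within_guideline(marked))
```

Because a square counts as text even if only a sliver of a letter touches it, this kind of estimate is rough and tends to overstate the true text area.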

Facebook Grid Tool

Another simple Facebook ad grid tester, this tool from facebookgridtool.com works similarly to the one above: you upload your image, then select each square that contains text.

If too much of your ad is made up of text, you’ll receive a message saying, “Oh no! Your image exceeds Facebook’s 20% or less text rule.” If your ad passes the test, you’ll receive a congratulatory message instead.

Facebook Ads 20% Image Grid Template

If you’d rather check out your text-to-image ratio in your image editing software, you can use Content Harmony’s image grid template, a transparent grid in .png form. The template is 1200 x 628 pixels — the generic post type template — and easy to use.

Simply place it over your ad image, then count the number of squares that contain text. If the number is lower than five, you’re good to go; if not, try to minimize the amount of text in your ad in order to pass Facebook’s ad reviews.
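If you want to reproduce the grid for a different image size, the geometry is simple to compute. The Python sketch below assumes a 5 x 5 grid, which is consistent with the five-square threshold mentioned above but is not confirmed by the template itself. `grid_boxes` is a hypothetical helper, not part of any tool listed here.

```python
def grid_boxes(width=1200, height=628, rows=5, cols=5):
    """Return (left, top, right, bottom) pixel boxes for each grid square, row by row.

    Defaults match the 1200 x 628 template size; the 5 x 5 layout is an assumption.
    """
    # Round the shared edges once so neighbouring squares never overlap or leave gaps.
    xs = [round(c * width / cols) for c in range(cols + 1)]
    ys = [round(r * height / rows) for r in range(rows + 1)]
    return [
        (xs[c], ys[r], xs[c + 1], ys[r + 1])
        for r in range(rows)
        for c in range(cols)
    ]


boxes = grid_boxes()
print(len(boxes), "squares")
print("top-left square:", boxes[0])
```

You can draw these boxes as guides in your image editor, then count the squares that contain text just as you would with the template.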

Final Takeaways

These tools can help ensure you are reaching as much of your target audience as possible by meeting Facebook’s ad guidelines.

If you find you have too much text in an ad image, consider using fewer words or reducing the font size of your text (while keeping it legible, of course).

You can also leverage the status update field to include most of your text there rather than directly on the ad’s image. There are definitely ways to tell a great story via your ads without using too much text in ad images.
