In the last year or so, brands and retailers have been jumping on the visual search bandwagon in droves.

Capitalizing on the huge number of mobile users out there (just under 5 billion) and the popularity of photo-based platforms like Snapchat and Instagram, visual product search turns the average smartphone into a virtual shopping tool.


But if visual search is still a bit of a black box to you – then you’ve come to the right article. In the following post, we’ll cover everything from the basics of visual product search to an exclusive interview with Slyce, the brains behind Tommy Hilfiger’s groundbreaking visual shopping experience: “Tommyland.”

What is Visual Product Search?

Visual product search lets you point your mobile device at a real-life item, or take a screenshot, and find the products you want without ever knowing their name, brand or price point. It takes the idea of impulse buying to a new level, making online shopping easier, faster and more interactive than ever before.

There’s a lot of psychology and basic physiology involved in why visual search is so effective.

For one, people are said to process images about 60,000 times faster than they do text; that in itself points to visual being more effective than traditional search techniques.

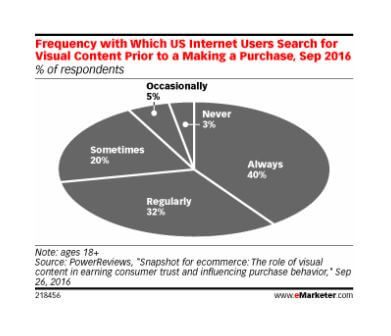

There’s also the fact that the web has created more educated, empowered and research-driven consumers—consumers who, according to a recent eMarketer Study, almost always look for visual content to back up a purchase before handing over their cash.

In fact, that same study shows that 97% of today’s consumers conduct some form of visual research before buying a product.

But there are other reasons why visual product search works so well, and a lot of it boils down to convenience. Consumers can be anywhere in the world to use it, and all they need is their mobile device (which studies show most people check 80 or more times a day).

There’s no tedious typing or sorting through categories of products, and it virtually cuts out the middleman. They get a direct line to the products they’re actually looking for, and there’s no wasted time between inspiration and purchase opportunity.

The whole process is seamless, and the consumer is able to find, buy and finalize their purchase in just a matter of seconds.

A few additional stats to note:

- According to 74 percent of consumers, text searches are inefficient in connecting them with the right products (Slyce)

- The average shopper abandons a product search after 1 minute and 30 seconds (Visenze)

- Only about 3.4 percent of shoppers can find the specific product they’re looking for in under 6 minutes (Visenze)

- The product image recognition market is projected to grow 216% by 2019, hitting a value of $25.65 billion (Markets and Markets)
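To put that last projection in perspective, a quick back-of-the-envelope calculation can recover the starting market size it implies. This is only a sketch: it assumes "grow 216%" means an increase of 216% over the starting value (so the final value is 3.16 times the base), which the source does not spell out, and the function name is purely illustrative.

```python
def implied_base_value(final_value: float, growth_percent: float) -> float:
    """Return the starting value implied by a final value and a percent increase.

    Assumption: growth_percent is an increase over the base, so
    final_value = base * (1 + growth_percent / 100).
    """
    return final_value / (1 + growth_percent / 100)


# Figures quoted in the list above: 216% growth to $25.65 billion.
base = implied_base_value(25.65, 216)
print(f"Implied starting market size: ${base:.2f} billion")
```

Under that reading, the projection implies a starting market of roughly $8.1 billion. If the 216% figure was instead meant as a multiple of the base, the implied starting point would be different.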

Who is using Visual Search?

Visual search is still fairly new, but the number of brands and retailers who have already caught on is pretty shocking. Most programs are still in testing and beta phases, though a handful (Pinterest Lens, for example) are available right now.

Here’s a look at a few of the visual product recognition search tools that are out there (or on the horizon):

Pinterest Lens:

Pinterest Lens lets you use your mobile phone camera to search not only Pinterest posts, but also related images outside of the platform.

According to Evan Sharp, co-founder and head of product at Pinterest, “Sometimes you spot something out in the world that looks interesting, but when you try to search for it online later, words fail you.”

“You have this rich, colorful picture in your mind, but you can’t translate it into the words you need to find it. At Pinterest, we’ve developed new experimental technology that, for the first time ever, is capable of seeing the world the way you do. Now there’s a way to discover ideas without having to find the right words to describe them first.”

Using the Lens app, you can point your mobile camera at a pair of shoes in a store, then tap to see related styles or even ideas for what else to wear them with.

According to the announcement, Lens works best for finding home decor ideas, things to wear and food to eat. One of the most interesting uses of Lens is what it can do with food; just point it at an ingredient, and the app will display potential recipes that use it.

Pinterest said it will continue improving its technology and anticipates that the range of objects Lens recognizes will keep getting wider.

eBay Image Search

eBay has two different visual search tools in the works:

- eBay Image Search

- Find it on eBay

The former works like other tools, letting you take a photo or use one from your camera roll to find eBay listings that match the image.

The latter, however, is designed to work with other image searches. Just plug in a photo link from Google, Twitter, Facebook or any other site, and the tool will connect you with related listings.

Google Lens

A little different than other visual search options, Google Lens doesn’t require you to take or even upload a photo. Simply use your phone to scan an item in the real world, and Google’s AI will help you take action based on what that item is.

It could connect you to sites to buy related products on, or it may give you directions on how to take care of the flower sitting in front of you.

An example Google gives for potential uses?

Use your phone’s camera to scan over a nearby router. The tool will then give you the option to tap and connect to its Wi-Fi instantly.

Just a heads up: There’s currently no set-in-stone release date for Google Lens.

Amazon Visual Search

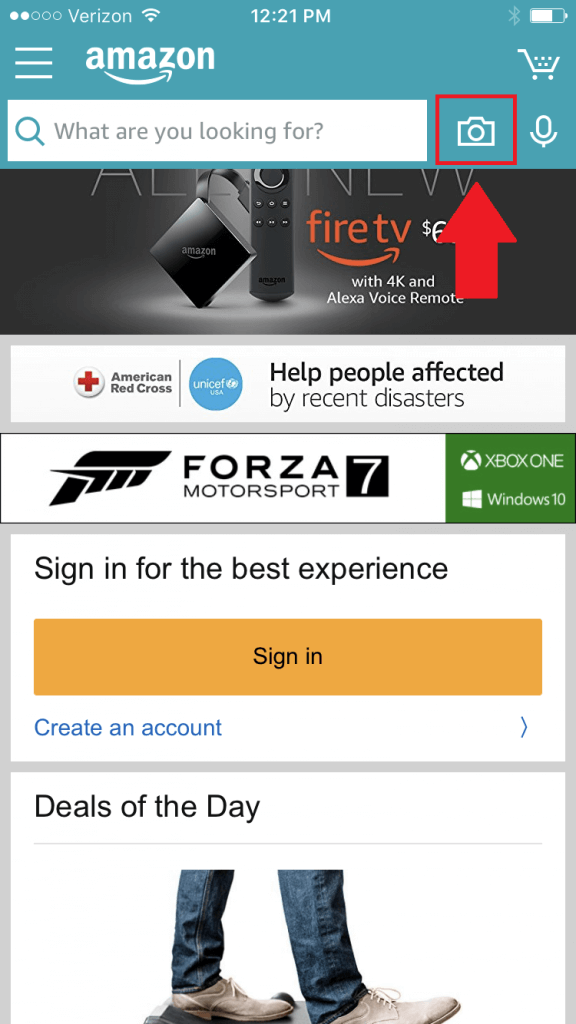

Though Amazon technically had its “Amazon Remembers” visual search tool for media back in 2009, the retailer is now offering a larger-scale product image recognition capability that works much like other rival tools.

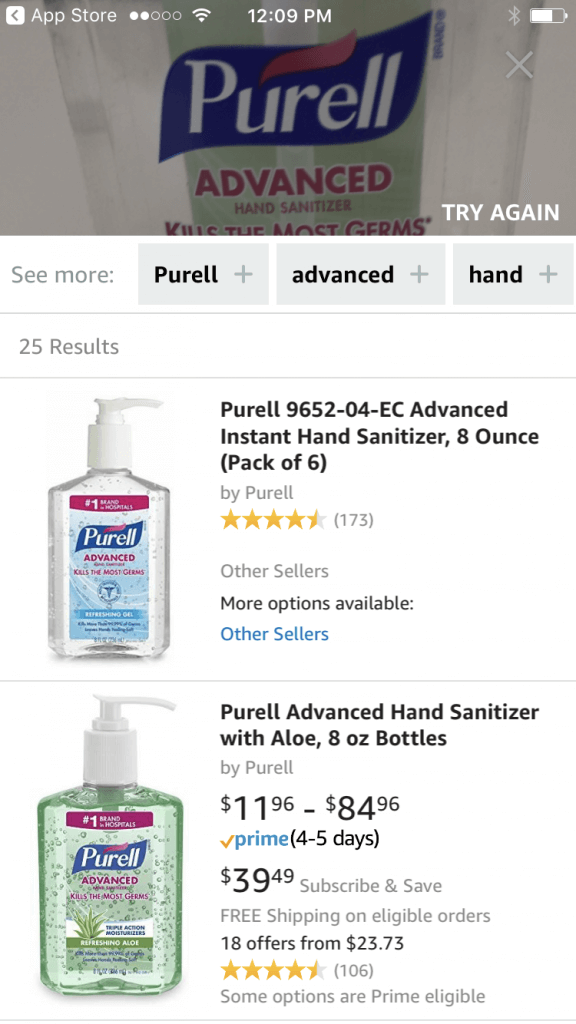

Users can point their camera at real-life outfits, products, barcodes or just about anything and easily pull up Amazon products that match or relate.

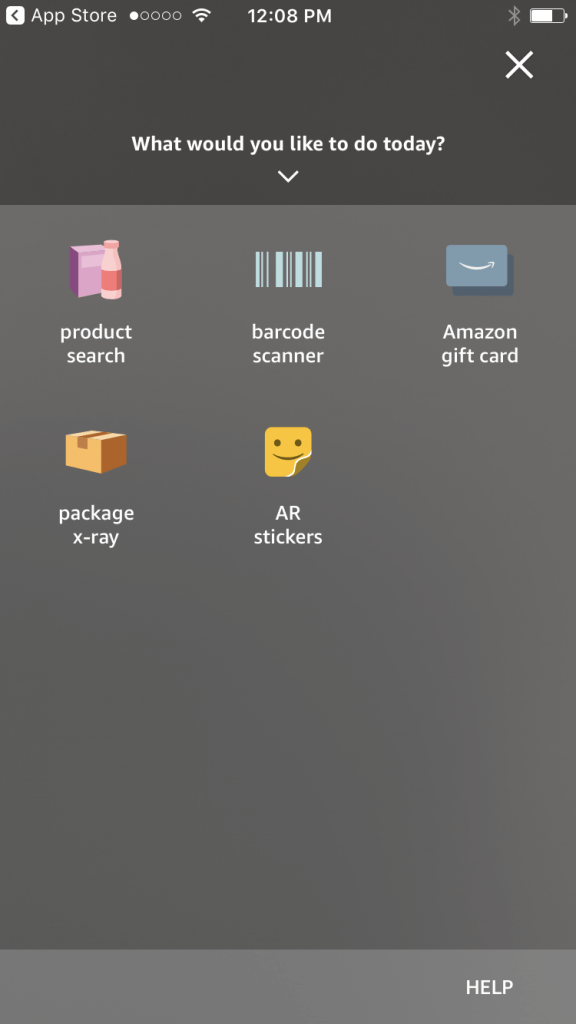

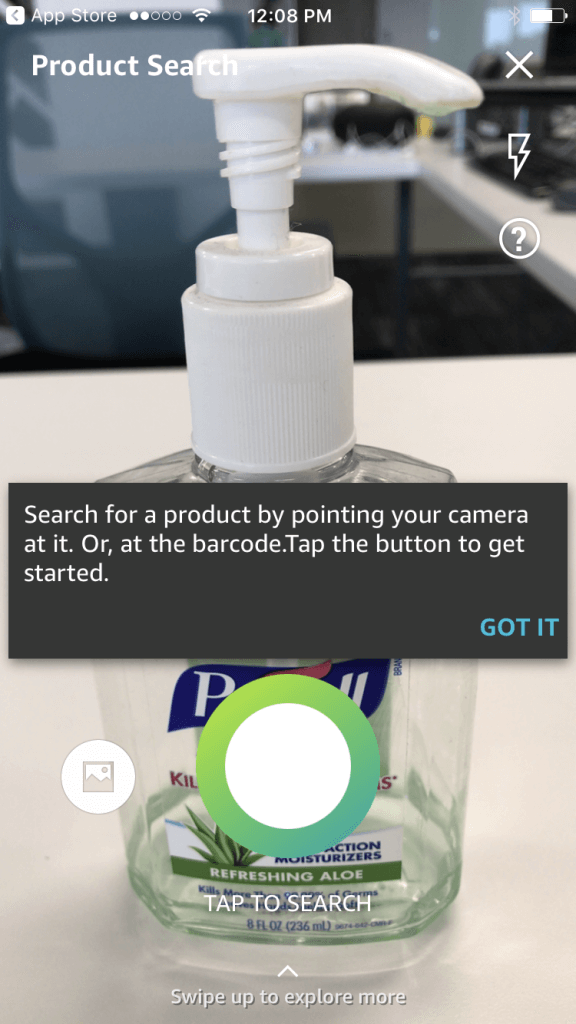

Below is a quick walkthrough of the Amazon mobile visual product search process:

Step 1: Open the camera icon on the Amazon app

Step 2: Select “Product Search” (or other options)

Step 3: Snap a quick picture of the product

Step 4: Amazon populates matching items; simply click to purchase.
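For readers curious about what might happen between snapping the picture and seeing results, here is a minimal sketch of one common general approach: represent each image as a list of numbers (a "feature vector") and return the catalog items whose vectors are most similar to the photo's. This is not Amazon's actual method, which the article does not describe. The catalog, the three-number vectors, and the function names are all hypothetical, and a real system would derive much longer vectors from an image-recognition model.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Avoid dividing by zero for an all-zero vector.
    return dot / (norm_a * norm_b)


def find_similar_products(query_vector, catalog, top_k=3):
    """Return the names of the top_k catalog items most similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in catalog.items()
    ]
    scored.sort(reverse=True)  # Highest similarity first.
    return [name for _, name in scored[:top_k]]


# Hypothetical catalog with made-up feature vectors, for illustration only.
CATALOG = {
    "red sneaker": [0.9, 0.1, 0.0],
    "red running shoe": [0.8, 0.2, 0.1],
    "blue henley": [0.1, 0.9, 0.2],
    "silk floral scarf": [0.2, 0.3, 0.9],
}

# Stand-in for the vector a model would compute from the shopper's photo.
photo_vector = [0.85, 0.15, 0.05]
print(find_similar_products(photo_vector, CATALOG, top_k=2))
```

With these made-up numbers, the two shoe listings rank ahead of the shirt and the scarf, which mirrors the "match or relate" behavior described above.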

The Shopper’s Perspective

Outside of Amazon, eBay, Google, and Pinterest, a growing number of retailers are also experimenting with their own visual search technology, including Target, Wayfair, and ASOS. All have, or are working on, a proprietary tool as we speak, further proving that the future of search is visual.

“I use visual product search a lot in my day to day – especially when I’m shopping inside a store,” Grütter said.

“If I find a product that I like, I’ll usually open up my Amazon app and snap a picture to see if I can find the same product for cheaper.”

“Even though I’m already at the store (with the item in front of me) – unless I’m in dire need of something, I’m usually willing to wait the extra two days to get a better price.”

“Of course, if it’s something I need right away like detergent or socks, I probably won’t bother to price match on those items,” she said.

“I’ve also had some really good experiences with American Eagle’s visual product search app. I can usually find nice basics at AE, like a long sleeve henley.”

“Now, when I come across a shirt I like instead of asking ‘Where did you buy that’ I simply snap a quick picture & American Eagle will auto pull products they already have in stock that are similar in style. If you happen to be loyal to a specific brand – this could definitely come in handy.”

“There’s so many different kinds of visual product search apps available these days but personally, I think it translates really well to Amazon’s Marketplace because a lot of shoppers are focused on finding a specific product for the best price possible.”

Q&A with Slyce: Visual Search Technology for Brands

Slyce is one of the leading visual search technology providers for big name brands including:

- JCPenney

- Express

- Home Depot

- Macy’s

- Nordstrom

- Best Buy

- Neiman Marcus

Their visual search technology can be used by retailers, brands & publishers to allow shoppers to transact at the “moment of inspiration”. Slyce also provides the ability to target new customers and maximize returns from existing customers.

They also offer 3D real-world product recognition with barcode and catalog scanning technologies for an all-encompassing universal scanner solution.

We spoke with Ted Mann, CEO at Slyce, to learn more about how his top-name clients are utilizing visual search to improve the shopping experience today.

Q. What does Slyce specialize in?

A. We see ourselves as a visual search company, with a focus on retail. Specifically, we power the camera search feature (aka the camera icon located next to the search bar within the retailer’s app).

If you tap on that icon, it will invoke the Slyce technology so you can take a picture of a product, scan it, and identify the item within a catalog.

Right now, we are integrated with about 30 retail apps and working with about 50 brands in total.

Q. What types of brands qualify for visual product search?

A. I definitely think this technology could expand to brands both big and small. Although the majority of our clients are well known, we do work with some retailers that are a little smaller, whether that be in revenue or catalog size.

The bigger brands have been quicker to adopt our technology in part because they are trying to keep pace with Amazon. Amazon has forced a lot of brands to come up with new ways to engage their consumers on mobile.

Q. What’s trending in the visual product search industry?

Visual product search is definitely growing:

For the retailers that we work with, visual product search is growing between 20% and 40% month over month.

Three years ago, when we first signed Neiman Marcus, the usage was not that significant, but now visual product search actually makes up a large chunk of the brand’s mobile search and overall ecommerce revenue.
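Month-over-month growth compounds quickly, which helps explain how usage could go from insignificant to a large share of mobile search. The sketch below shows what the quoted rates would mean if they held steady for a full year. That 12-month duration is an assumption for illustration only, since the interview does not say how long those rates lasted, and the function name is hypothetical.

```python
def compound_growth(monthly_rate: float, months: int) -> float:
    """Return the overall growth multiple after compounding a monthly rate."""
    return (1 + monthly_rate) ** months


# The low and high ends of the month-over-month range quoted above.
for rate in (0.20, 0.40):
    multiple = compound_growth(rate, 12)
    print(f"{rate:.0%} month over month for 12 months: about {multiple:.1f}x usage")
```

Sustained for a year, 20% monthly growth works out to roughly 9 times the starting usage, and 40% to roughly 57 times.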

A rise of interesting use cases

Second, I would also say there’s been an increase in the number of unique use cases and user experiences within visual search. When we first started, the most common use case was to take a picture of an item, identify it, and go to a product page (aka “snap to buy”).

But now, retailers are looking for new ways to leverage visual search in other shopping modes including:

- Build a Registry or List: One of the most popular features right now is building lists or a registry. Customers can use their mobile camera to walk around a store and snap multiple pictures (without having to leave the camera app) and quickly build their list of items.

- Custom Outfitting: Another good example is custom “outfitting” within American Eagle’s retail app. You can take a picture of any item and the app will generate an entire outfit for you – based on that product. It works really well and allows you to create multiple outfits easily.

- Retargeting: Brands can also leverage data from their mobile apps to retarget customers on other shopping channels. For example, if someone snaps a picture of a silk floral scarf – the retail brand can use this data to retarget them at a later date with a Facebook ad.

But beyond the trends listed above, one of Slyce’s most acknowledged innovations was the launch of Runway Recognition, an award-winning app designed for the 2017 “Tommyland” runway event.

“We worked closely with Tommy Hilfiger’s marketing team to create the first-ever Runway Recognition app to enable users to purchase the looks they loved as soon as they saw them live on the runway or in a pop-up shop,” Mann said.

“Tommy challenged us to build a whole new experience. They wanted us to figure out a way to identify every single item Gigi Hadid and the other runway models were wearing.”

“Normally, our solution will only apply to the item in the foreground, but they wanted the entire look to be identifiable,” he said.

Not only did Tommyland’s innovative technology win Slyce two Clio Awards, the show was also profitable for the brand.

“The theme was ‘See now, Buy now’ so if you were a person in the crowd and saw something you liked on the runway – you could purchase it right away and didn’t have to wait 6 months for it to hit the stores.”

But what if you weren’t invited to the elite runway performance?

Slyce’s team made the technology applicable to anyone. So, even if you were sitting at home with your friends or reading about it in a magazine – you could still snap and shop items from your phone.

https://www.youtube.com/watch?v=BRFhRSSfOBk#action=share

“It was a big success for them and we were a small part of that marketing strategy being profitable.”

Shortly after, Slyce worked with Tommy Hilfiger on another exclusive shopping experience: TommyNow Snap.

The latest app (now available) allows users to snap Tommy Hilfiger looks anywhere: in-store, from ads, on the runway [live or online], and there’s even an Augmented Reality (AR) experience where you can pick the clothes you are interested in and have a virtual model try them on.

Q. Tips for brands who want to learn more about visual product search?

A. I would say if you are new to the space – the first thing you can do is familiarize yourself with what the current experiences look like.

Download any of those retailer apps mentioned above – like Nordstrom, Home Depot, Pinterest, or Amazon. Get familiar with the flow of it – because each of those solutions works a little differently.

Once you get a sense of how things work then you can start to figure out how your brand can benefit from this type of technology. Maybe you can offer your customers a way to create an outfit or style their living room?

But I definitely think the best way to start is to dive in and utilize the technology.

Q. Bottom Line: What’s the ROI on visual product search?

A. We’ve shown ROI in a variety of different ways but from a “dollars” standpoint, we’re showing about 3x ROI.

Users who engage with visual search are also about three times as sticky as those who don’t.

But there’s a number of other ways to see positive results. For some retailers it drives a lot more app downloads, for others it’s an increase in sales.

ROI with mobile is sometimes subjective, so the most important thing is to figure out which areas you are looking to make the biggest impact in. The way you implement visual search will largely be guided by those goals.

